Read time: 9 minutes

Artificial intelligence (AI) is a rapidly growing field that has the potential to transform almost every aspect of society, from healthcare and transportation to education and entertainment. Recent developments in AI have generated excitement about its potential and, understandably, commercial interest. However, while AI has been portrayed as a savior in movies such as “Wall-E,” other works such as “Terminator,” “Ex Machina” and “Black Mirror” highlight the dangers and abuse of the technology and reflect the concerns in the public eye. Therefore, as AI becomes increasingly integrated into our lives, it is crucial that we establish foundational ethical principles that guide its development and use.

While there is currently no universally agreed statement of what would constitute ethical principles for AI, we believe that several ethical principles should be considered when designing and implementing AI systems, including transparency, accountability, accuracy, auditability, explainability, privacy, fairness, safety, human centricity and inclusivity.

Transparency is an essential principle for AI because it allows users to understand how the AI system works and how and why it makes certain decisions. Without transparency, AI systems can seem mysterious or even untrustworthy, which can lead to confusion and mistrust. Additionally, transparency enables researchers to identify and correct biases in AI systems, which is essential for ensuring that they do not perpetuate discrimination or inequality. AI developers should therefore be clear about which datasets are used to train the AI, and should ensure that its algorithms are available for review and reflect the other ethical principles.

AI systems should be designed to take responsibility for their actions, just like human beings. This means that AI systems should be transparent and explainable so that they can be audited and held accountable when they make mistakes or cause harm. Accountability also means that AI actors are responsible and accountable for the proper functioning of AI systems and for respecting AI ethics and principles, based on their roles, the context, and consistency with the state of the art.

Accuracy is the ability of the AI system to generate accurate results as the designers intended, without unintended consequences. To do so, AI systems should identify, log, and articulate sources of error and uncertainty throughout the algorithm and its data sources so that expected and worst-case implications can be understood and can inform mitigation procedures.

Auditability. In order to effect accountability and to demonstrate transparency, AI systems must feature auditability to enable interested third parties to probe, understand, and review the behavior of the algorithm through disclosure of information that enables monitoring, checking or criticism.
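One way to picture the kind of disclosure described above is a log entry written for every automated decision. The following is a minimal sketch only, not a prescribed format: the function name `make_audit_record`, the field names, and the sample loan decision are all hypothetical and chosen purely for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_audit_record(model_version, inputs, output, explanation):
    """Build one audit-log entry for a single automated decision.

    All field names here are illustrative assumptions, not a standard.
    """
    # Serialize inputs deterministically so identical inputs give the same hash.
    canonical_inputs = json.dumps(inputs, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Store a hash rather than raw inputs so the log itself holds no personal data.
        "input_hash": hashlib.sha256(canonical_inputs.encode("utf-8")).hexdigest(),
        "output": output,
        "explanation": explanation,
    }


# Hypothetical example decision.
record = make_audit_record(
    model_version="loan-model-1.2",
    inputs={"income": 52000, "years_employed": 4},
    output="approved",
    explanation="income above an illustrative threshold",
)
print(json.dumps(record, indent=2))
```

Hashing the inputs is a design choice in this sketch: it lets a reviewer confirm which inputs produced a decision without the log becoming a second store of personal data.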

Explainability. Being able to explain how the AI system works to generate its results removes room for doubt. Developers of AI systems should ensure that automated and algorithmic decisions, and any associated data driving those decisions, can be explained to end-users and other stakeholders in plain, non-technical terms.

Privacy is another fundamental ethical principle that should guide the development of AI systems. AI systems must be designed to respect the privacy of individuals and protect their personal data, as already required by law. As AI systems become more prevalent in everyday life, it is essential to ensure from the outset that they do not infringe upon individuals’ privacy rights, because once an AI system has accessed personal data, undoing that access is extremely difficult, if not impossible. Privacy demands that users maintain control over the data being used and the context in which it is used, and that they retain the ability to modify that use and context.

Fairness is also an essential ethical principle for AI. AI systems should be designed to treat everyone equally and without bias. This means that AI systems should be trained on diverse data sets that represent different demographic groups and should be monitored to ensure that they do not perpetuate discrimination or inequality. AI developers should ensure that algorithmic decisions do not create discriminatory or unjust impacts across different demographic lines (e.g. race, sex, socioeconomic status). We should seek to develop and include monitoring and accounting mechanisms to avoid unintentional discrimination when implementing decision-making systems, and strive to consult a diversity of voices and demographics when developing systems, applications and algorithms.
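A monitoring mechanism of the kind mentioned above could start by comparing how often each demographic group receives a favourable decision. The sketch below is one simple illustration under stated assumptions: the function names, the group labels "A" and "B", the sample decisions, and the review threshold of 0.8 are all hypothetical, and a ratio of selection rates is only one of several possible fairness measures.

```python
from collections import defaultdict

# Illustrative threshold only; the right value depends on context and applicable law.
REVIEW_THRESHOLD = 0.8


def selection_rates(decisions):
    """Return the share of favourable outcomes for each group.

    `decisions` is an iterable of (group, favourable) pairs, where
    `favourable` is True when the decision benefited the person.
    """
    totals = defaultdict(int)
    favourable_counts = defaultdict(int)
    for group, favourable in decisions:
        totals[group] += 1
        if favourable:
            favourable_counts[group] += 1
    return {group: favourable_counts[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome, so there is no gap
    return min(rates.values()) / highest


# Hypothetical decisions for two groups.
sample = [("A", True), ("A", True), ("A", False), ("A", True),
          ("B", True), ("B", False), ("B", False), ("B", False)]
rates = selection_rates(sample)
ratio = disparity_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove discrimination by itself; in this sketch it only flags a decision process for closer human review.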

Safety is another critical ethical principle for AI. AI systems must be designed to ensure that they do not pose a risk to human safety or the environment. This includes designing AI systems that are secure and cannot be hacked, as well as ensuring that they do not cause harm to humans or the environment. The overriding principle must be that AI system implementation must create value which is materially better than not engaging in that project.

Human centricity and well-being. Humans must be front and center in AI systems. The design, development and implementation of technologies must not infringe internationally recognized human rights, and must be inclusive, meaning accessible to as wide a population as possible. To this end, AI development should aim for an equitable distribution of the benefits of data practices and avoid data practices that disproportionately disadvantage vulnerable groups. In addition, it should aim to create the greatest possible benefit from the use of data and advanced modelling techniques. AI developers should engage in data practices that encourage the practice of virtues that contribute to human flourishing, human dignity and human autonomy. They should give weight to the considered judgements of people or communities affected by data practices and align with the values and ethical principles of those people or communities. Finally, they should design AI systems to make decisions that cause no foreseeable harm to the individual, or at least minimize such harm (in necessary circumstances, when weighed against the greater good).

Bias. One of the most significant ethical concerns surrounding AI is bias. AI systems are only as objective as the data they are trained on, and if the data is biased, then the AI system will also be biased. This is particularly problematic when it comes to AI systems that are used to make decisions about people’s lives, such as hiring or loan approval. Like prejudice, bias is an uncomfortable topic to discuss.

To address bias in AI, researchers must work to ensure that AI systems are trained on diverse data sets that accurately represent different demographic groups. Researchers should incorporate techniques, like reinforcement learning from human feedback, that help to reduce bias. Additionally, AI systems must be designed to identify and correct biases when they arise.
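Checking whether a training set represents different demographic groups can begin with a simple comparison of group shares. The sketch below is a rough illustration, not a complete bias audit: the function name `representation_gaps`, the groups "A", "B" and "C", the reference shares, and the five-percentage-point tolerance are all assumptions made for the example.

```python
from collections import Counter


def representation_gaps(group_labels, reference_shares):
    """Return (dataset share - reference share) for each reference group.

    Negative values mean the group is under-represented in the dataset
    relative to the reference population.
    """
    total = len(group_labels)
    if total == 0:
        raise ValueError("group_labels must not be empty")
    counts = Counter(group_labels)
    return {
        group: counts.get(group, 0) / total - expected
        for group, expected in reference_shares.items()
    }


# Hypothetical dataset of 100 records and hypothetical population shares.
labels = ["A"] * 70 + ["B"] * 20 + ["C"] * 10
reference = {"A": 0.5, "B": 0.3, "C": 0.2}

gaps = representation_gaps(labels, reference)
# Flag groups that fall more than 5 percentage points short (illustrative tolerance).
underrepresented = [group for group, gap in gaps.items() if gap < -0.05]
print(underrepresented)
```

Balanced representation is only a starting point: a dataset can mirror the population and still encode biased historical decisions, so a check like this complements, rather than replaces, the other measures described above.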

Job displacement. Another “hot-potato” ethical concern surrounding AI is job displacement. As AI becomes more advanced, it has the potential to replace human workers in certain industries, which could lead to widespread unemployment and economic instability.

To address job displacement, policymakers and business leaders must work together to ensure that the benefits of AI are distributed fairly across society. This could involve implementing policies that promote job training and re-skilling for workers whose jobs are at risk of being automated.

Finally, there is the ethical concern of AI governance. As AI becomes more integrated into society, it is essential to establish a regulatory framework that governs its development and use. This includes establishing standards for transparency, accountability, privacy, fairness, and safety, as well as developing mechanisms for auditing and enforcing these standards.

- AI has the potential to be saviour or foe

- Ethical principles should be considered when designing and implementing AI systems

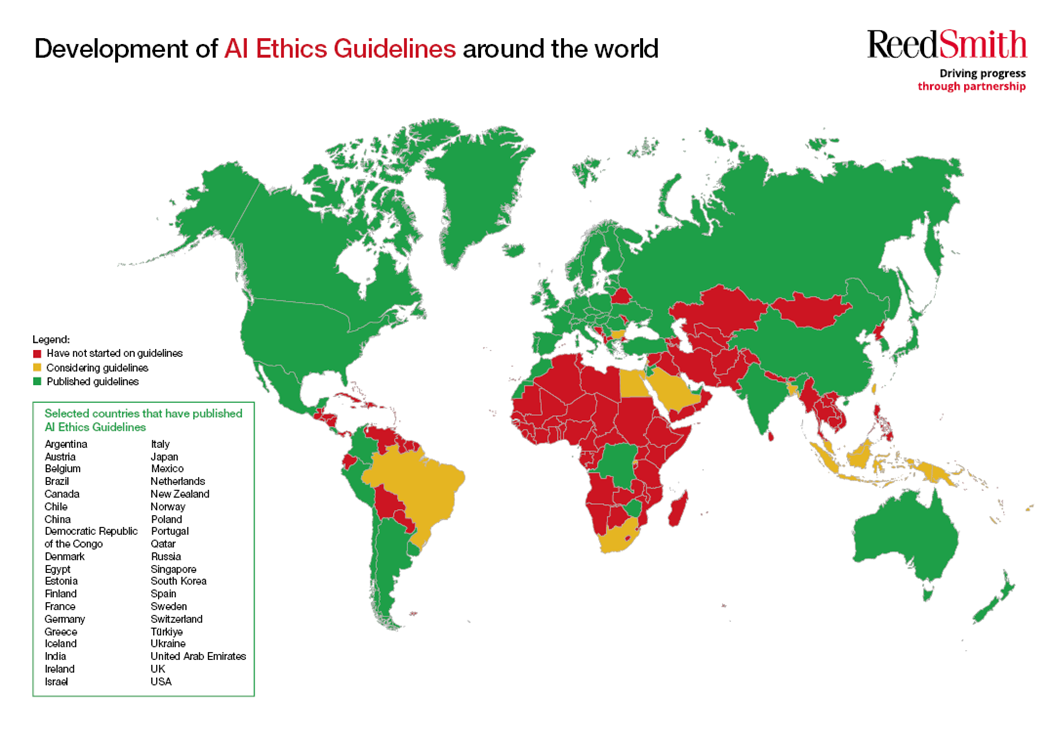

- Jurisdictions are applying ethical principles to AI in various ways